Author: Austin McDaniel

Date: Apr 13, 2026

I Wore an AI Wearable to a Conference. Here's What Actually Happened.

I go to a lot of conferences. RSAC, Black Hat, smaller founder events — the usual circuit. Every year it's the same problem: you have 30 incredible conversations in three days, and by the time you're on the flight home, half of them are gone. Names blur. That killer insight someone dropped at the happy hour? Lost.

For the last two years I've been using Fireflies AI for meeting notes, and it's been solid — for meetings. But conferences aren't meetings. They're hallway conversations, demo walkthroughs, dinners where someone casually mentions a market shift that rewires how you think about a whole vertical. You can't ask someone to hop on a Zoom so your transcription bot can join.

So this year I went looking for something better. A note taker I could wear.

The Search

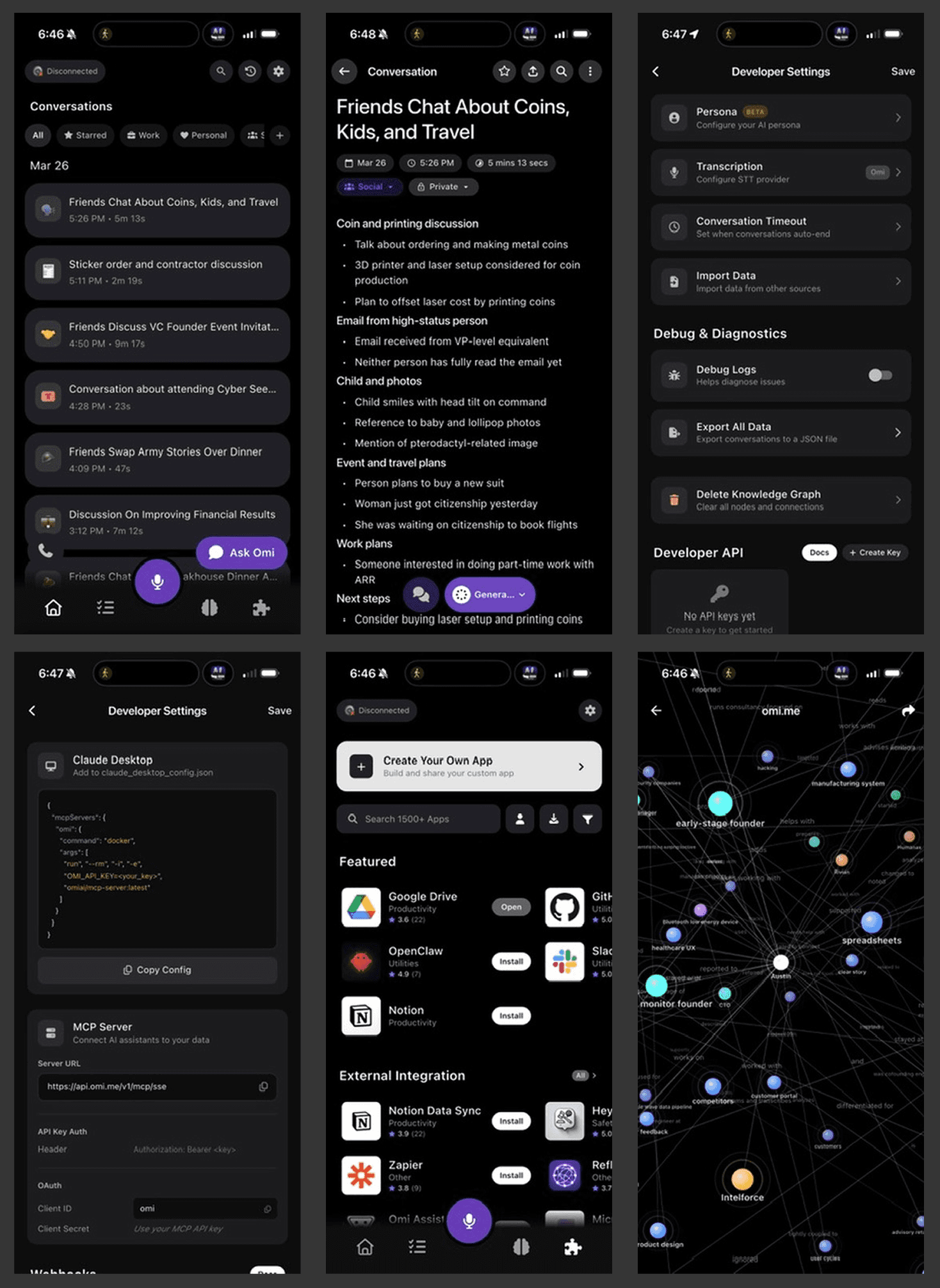

I looked at a bunch of them: Plaud, Friend, Soundcore, a few others. They all take slightly different approaches, but the one that stood out was Omi. Here's why: unlike devices such as Plaud, which record in chunks, Omi records continuously, 24/7. That matters when you're trying to capture the stuff you didn't know was important at the time. The throwaway comment at a booth. The pattern you only recognize three conversations later. Continuous capture is the only way you get that.

I'll be blunt — it's also cheap. Low hardware cost, no mandatory subscription, and they've got an open API with MCP support and full data controls. For a technical founder who wants to actually do something with the data, that's the right set of trade-offs.

What Worked

The API and data story is genuinely good. You own your data, you can pipe it wherever you want, and the MCP integration means you can wire it into your own workflows without begging for an enterprise contract. For a product at this stage, that's impressive. Most wearable AI companies lock everything behind a subscription and a proprietary app. Omi doesn't.

And honestly, the 24/7 capture model is the right idea. When it works, you get this ambient record of your day that surfaces things you would've missed. I caught a few moments reviewing transcripts later that I'd completely forgotten — the kind of context that's gold when you're trying to map out what's actually happening in a market.

What Didn't

Here's the thing — "when it works" is doing a lot of heavy lifting in that last paragraph.

The Bluetooth disconnects are frequent. You'll pull out your phone and realize the device hasn't been connected for the last 20 minutes. At a conference, 20 minutes is two conversations. Gone.
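If you can export your transcript segments with timestamps, you can at least measure the damage after the fact. Here's a minimal sketch, assuming each segment is a (start, end) pair of datetimes. The segment shape, the `find_gaps` helper, the 5-minute threshold, and the sample data are all assumptions for illustration, not Omi's actual export format or API.

```python
from datetime import datetime, timedelta


def find_gaps(segments, threshold=timedelta(minutes=5)):
    """Return (gap_start, gap_end) pairs where nothing was captured for longer than threshold.

    segments: list of (start, end) datetime pairs (hypothetical shape).
    """
    gaps = []
    ordered = sorted(segments)
    if not ordered:
        return gaps
    last_end = ordered[0][1]
    for start, end in ordered[1:]:
        # A gap is the silence between the latest end seen so far and the next start.
        if start - last_end > threshold:
            gaps.append((last_end, start))
        last_end = max(last_end, end)
    return gaps


# Made-up sample data: capture drops out between 10:20 and 10:40.
segments = [
    (datetime(2026, 4, 13, 10, 0), datetime(2026, 4, 13, 10, 12)),
    (datetime(2026, 4, 13, 10, 13), datetime(2026, 4, 13, 10, 20)),
    (datetime(2026, 4, 13, 10, 40), datetime(2026, 4, 13, 10, 55)),
]

for gap_start, gap_end in find_gaps(segments):
    print(f"No capture from {gap_start:%H:%M} to {gap_end:%H:%M}")
```

It won't bring the conversations back, but it tells you which ones to reconstruct from memory while they're still fresh.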

The bigger problem is signal-to-noise. Omi captures everything, but it doesn't capture enough context to make most of it useful. Audio-only transcription of a loud expo floor is rough. You get fragments, misattributions, crosstalk — and no way to reconstruct who said what or what you were looking at when they said it. The transcript becomes a wall of text you have to manually sift through, which defeats the purpose.
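One way to cut down the sifting is a crude keyword pass that pulls out only the lines you care about, plus a little surrounding context. This is a rough sketch, assuming the transcript is available as a plain list of text lines. The `find_mentions` helper and the sample lines are hypothetical, and nothing here depends on a specific Omi feature.

```python
def find_mentions(lines, keywords, context=1):
    """Return (line_index, snippet) pairs for transcript lines that mention any keyword.

    Each snippet includes up to `context` lines before and after the matching line.
    """
    wanted = [k.lower() for k in keywords]
    hits = []
    for i, line in enumerate(lines):
        # Case-insensitive substring match, so "budget" also matches "budgets".
        if any(k in line.lower() for k in wanted):
            start = max(0, i - context)
            stop = min(len(lines), i + context + 1)
            hits.append((i, lines[start:stop]))
    return hits


# Made-up transcript lines, for illustration only.
transcript = [
    "yeah the booth traffic has been wild",
    "we're seeing budgets shift toward identity tooling",
    "sorry, what was that",
    "anyway, grab a sticker",
]

for index, snippet in find_mentions(transcript, ["budget", "pricing"]):
    print(index, snippet)
```

This only narrows the wall of text. It does nothing for the harder problem of who said what, which is why the missing context matters so much.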

And the app itself just isn't polished. Bugs everywhere. It feels like an early beta that shipped as a product. I get it — hardware startups move fast and break things — but the experience gap between "cool demo" and "tool I trust" is real.

The Verdict

Will I use it at the next conference? Probably.

Would I buy it again? Probably not.

Does it deliver on its potential? Not in its current form.

Here's my unsolicited advice for the Omi team: audio alone isn't enough. To make ambient capture actually useful, the device needs vision. A camera, even a low-res one, that can tag who you're talking to, what you're looking at, and where you are when a conversation happens. That's the context layer that turns a messy transcript into actionable intelligence. Without it, you're recording a podcast of your life with no show notes.

The wearable AI space is going to be massive — but only for the products that figure out that capture without context is just noise. The companies that crack multi-modal ambient intelligence — audio, visual, spatial — those are the ones that'll own this category.

If you're building in this space and trying to figure out the product design side of wearable AI, that's exactly the kind of problem we work on at Good Code. We spend a lot of time helping founders turn ambitious technical bets into products people actually use. The gap between "records everything" and "surfaces what matters" is a design problem, not just an engineering one.